Let us imagine: you have found your spark, you have explored the market space and found a problem worth solving, and you now even have part of the product that may solve that problem. Your objective is to make the product the best thing for solving that problem. You have been working on this for months, maybe even a year or more. The product passes all of your automated tests, but how do you know customers will actually be able to use it to solve their problem? When you think about how your product works, you view it as a clear path to success, similar to the image below.

You enter some information, tweak this, change that, press a button and ta-da, the problem is solved! Unfortunately, we are often blinded by our closeness to the product. What our users often see is similar to the image below: a bewildering array of choices, with no clear path forward.

How can we show them the path? This is where Observational Testing comes in. Observational Testing allows us to understand the pains of our users, so that we can remove those pains and improve our product.

On Metacritic.com, Half-Life 2 is the highest rated PC game of all time; the original Half-Life comes in at #4. Both games were made by Valve Corporation. One of the key practices that Valve used to take their games from mediocre to great is Observational Testing. They call it playtesting. Valve would bring in volunteers to sit and play their partially finished game, while members of the team observed them and took notes. The team was not allowed to say anything to the player.

To quote Ken Birdwell, a senior designer there: “Nothing is quite so humbling as being forced to watch in silence as some poor play-tester stumbles around your level for 20 minutes, unable to figure out the "obvious" answer that you now realize is completely arbitrary and impossible to figure out.”

A two-hour playtest would result in 100 or so "action items": things that needed to be fixed, changed, added, or deleted from the game. That is a phenomenal amount of feedback.

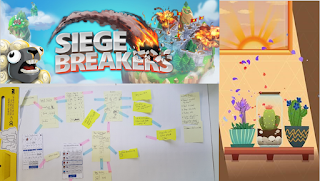

I personally ran many observational tests when developing the prototype games “Planty”, “Bargain Variety Store” and “Siege Breakers” at Halfbrick Studios. I can tell you that observational tests are easy to run, horribly painful and immensely beneficial all at once. The hair-pulling frustration of watching the user see a forest of trees where you see a clear path really pushes you to improve your product.

Running an Observational Test is straightforward:

- Bring in a customer or potential customer. This bit is hard.
- Give them an objective to achieve in the test, either verbally or written out. This could be a hypothesis you want to test.
- While they attempt to achieve the objective, record video over their shoulder (a smartphone will do just fine).
- Observe what they do and don’t do, without saying anything or offering any guidance. This is the hard part.
- Afterwards, ask what they were thinking at key steps (e.g. when they got stuck, when they achieved success).

Observational Testing is how you can dramatically improve your product. It brings three key benefits:

- Challenge your design approach. Are we tackling this problem in the right way?
- Validate hypotheses. As mentioned, the objective you provide at the start can reveal whether customers will use the product in the way you anticipated, or whether they can understand the information provided.
- Dramatically increase usability. This is moving them from the forest to the path, and is the most evident benefit when people start to use Observational Testing.

Halfbrick Studios maintains full copyright over Siege Breakers, Planty and Bargain Variety Store.

Photo Reference: https://www.flickr.com/photos/eggrole/7524458398